Change Point Detection

Overview

Apache Otava implements a nonparametric change point detection algorithm designed to identify statistically significant distribution changes in time-ordered data. The method is primarily based on the E-Divisive family of algorithms for multivariate change point detection, with some practical adaptations.

At a high level, the algorithm:

- Measures statistical divergence between segments of a time series

- Searches for change points using hierarchical segmentation

- Evaluates significance of candidate splits using statistical hypothesis testing

The current implementation prioritizes:

- Robustness to noisy real-world signals

- Deterministic behavior

- Practical runtime for production workloads

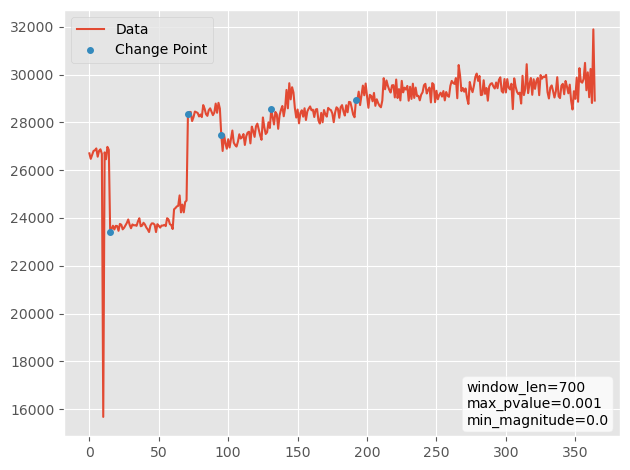

A representative example of algorithm application:

Figure 1. An example of running compute_change_points function on Tigerbeetle dataset. The parameters for the compute_change_points function are displayed in the bottom right corner. Here the algorithm detected 5 change points with a statistical test showing that behavior of the time series changes at those points. In other words, the data have different distributions to the left and to the right of each change point.

With that being said, the difference could be merely a change in the mean, but the algorithm isn't restricted to that and could also detect other kinds of changes, such as a change in variance, skewness, and other shifts in the overall shapes between the distributions even if the mean stays constant. Once potential change points are identified, a statistical test is performed to validate which of the points are in fact change points. That is to say, they are statistically significant. The user gets to choose the threshold (also called p-value threshold or significance threshold) used for the statistical test. The threshold adjusts the balance between finding fewer change points and finding false positives. For example, a p-value threshold of 0.01 roughly means that there will be at most 1% false positives. The false negatives are harder to estimate and depend on both the chosen threshold and the actual data distribution.

Technical Details

Main Idea

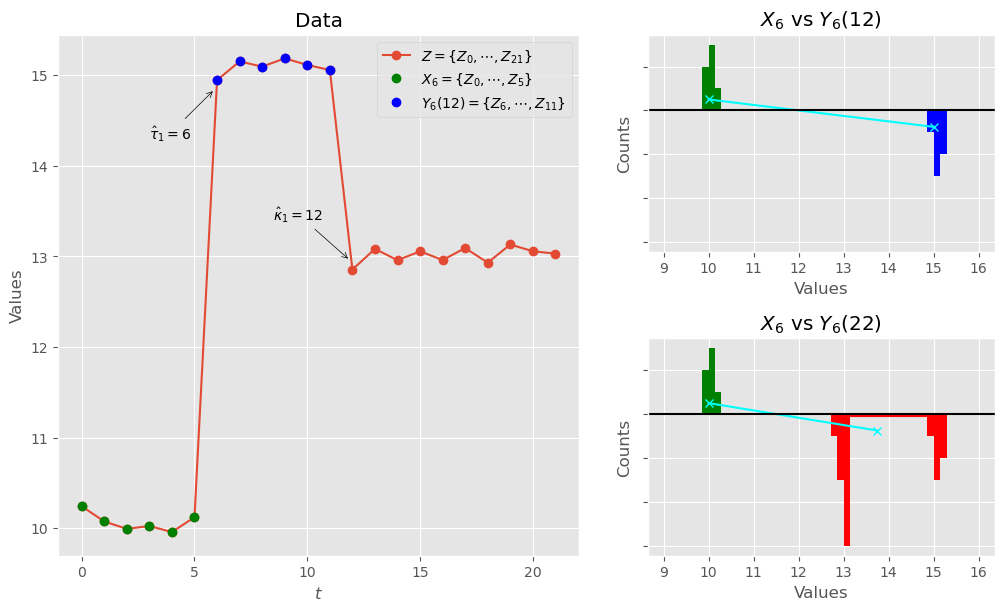

The main idea is to use a divergence measure between distributions to identify potential points in time series at which the characteristics of the time series change. Namely, having a time series (which may be multidimensional, i.e. from with ) we are testing non-empty subsequences and for all possible to find such that maximize the probability that and come from different distributions. If the probability for the best found is above a certain threshold, then the candidate is a change point. The process is repeated recursively to the left and to the right of until no candidate corresponds to a high enough probability. This process yields a series of change points . See an example in Figure 2.

Figure 2. On the left subfigure, there is a time-series in red color, with two subseries in green and in blue. Among all possible consecutive subseries, the subseries and have the highest probability to come from different distributions, i.e., being the most distinct. These subseries are defined by the pair . If the difference between these subsequences is big enough (above the significance threshold), then is a change point, and the algorithm will continue to the next step with the next best pair being . Note that the defines the end of the subseries , which might be before the end of series , i.e., . The reason can be seen on the right subfigures. Specifically, it shows that if we restrict the subseries so that it does not go all the way to the end of series , the algorithm might detect the changes in time series better. Consider a loosely defined distance between the distributions (cyan) in the top right subfigure vs and in the bottom right subfigure vs . The tail in muddies the signal, compared to the truncated series .

There are a couple of additional nuances and notes that we want to mention:

- According to the provided definition, the change point is the first point of the second subsequence, and, because both subsequences must be non-empty, the change point cannot be the first point of the whole sequence , i.e., point .

- There exists an alternative way of defining the change point (as the last point of the first subsequence - in that case, the last point cannot be the change point). And, in fact, some of the papers cited here are using that other definition. However, Apache Otava uses the term "change point" as defined above, i.e., the change point is the first commit at which metrics change.

- In the example in Figure 2 we effectively used distance between means as a distance between the distributions. It's an oversimplification for illustrative purposes, and in reality we actually use a divergence measure between multivariate distributions. See Original Work section for more details.

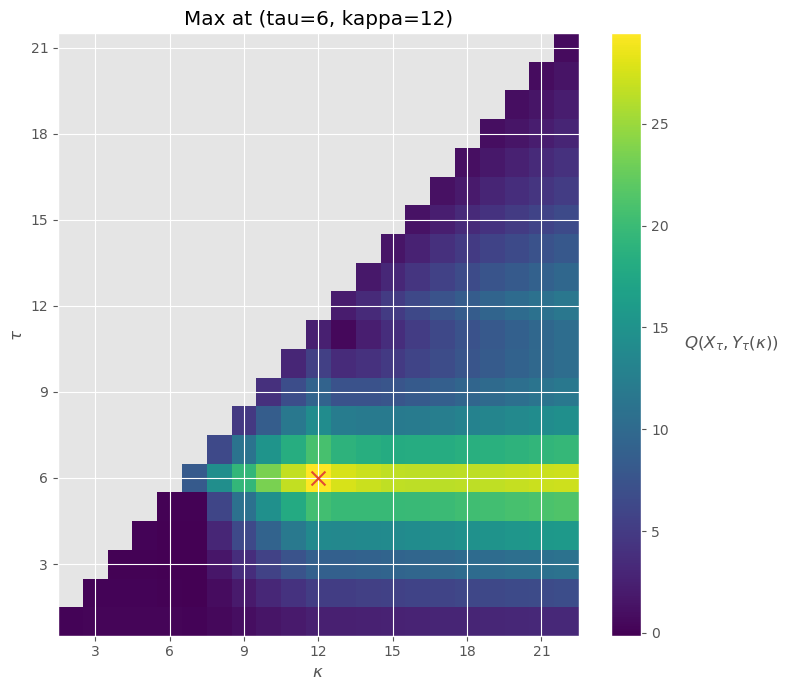

- In the example in Figure 2, we compared only two pairs of the values , namely and . The algorithm actually checks for all valid values before choosing the best one. See Figure 3.

Figure 3. Divergence measure between distributions of and for all valid values of . The biggest number corresponds to the most promising pair of values (marked by a red cross).

Original Work

The original work was presented in "A Nonparametric Approach for Multiple Change Point Analysis of Multivariate Data" by Matteson and James. The authors provided extensive theoretical reasoning on using the following empirical divergence measure:

where , usually we take ; is Euclidean distance; and the coefficient in front of the second and third terms in are reciprocals of binomial coefficients. The idea behind each term in is actually quite simple. The first term of is the average of all possible pairwise distances between subsequences and . The second and third terms are the average of all possible pairwise distances of points within subsequences and , respectively. The intuition behind this divergence measure is to compare "how large" is the distance between the distributions, compared to the distances within each distribution on average. And the coefficient in front of in is a normalization coefficient that is required for some theoretical results to work.

The most "dissimilar" subsequences are given by

After the subsequences are found, one needs to find the probability that and come from different distributions. Generally speaking, the time sub-series and could come from any distribution(s), and the authors proposed the use of a non-parametric permutation test to test for significant difference between them. If the candidates are shown to be significant, the process is to be run using hierarchical segmentation, i.e., recursively. For more details read the linked paper.

Hunter Paper

While the original paper was theoretically sound, there were a few practical issues with the methodology. They were outlined clearly in Hunter: Using Change Point Detection to Hunt for Performance Regressions by Fleming et al.. Here is the short outline, with more details in the linked paper:

- High computational cost due to the permutation significance test.

- Non-determinism of the results due to the permutation significance test.

- Missing change points in some of the patterns as the time series expands.

The authors proposed a few innovations to resolve the issues. Namely,

- Faster significance test: replace permutation test with Student's t-test, that demonstrated great results in practice - This helps reduce computational cost and non-determinism.

- Fixed-Sized Windows: Instead of looking at the whole time series, the algorithm traverses it through an overlapping sliding window approach - This helps catch special pattern-cases described in the paper.

- Weak Change Points: Having two significance thresholds. Algorithm starts with a more relaxed threshold to identify a larger set of candidate change points, called "weak" change points, and then continues by re-evaluating all "weak" change points using stricter threshold to yield the final change points - Using a single threshold could have myopically stopped the algorithm. Allowing it to look for more points and filter out the "weak" ones later resolves the issue.

Apache Otava Implementation

The current implementation in Apache Otava is conceptually the one from the Hunter paper with a newly rewritten implementation of the algorithm.

Starting with a zero-indexed time series , we are interested in computing . Moreover, because we are going to recursively split the time series, the series in the arguments of the function do not have to start from the beginning of the series. For simplicity, let us fix and define the following function instead:

which is equivalent to comparing the time series slices Z[s:tau] and Z[tau:kappa] in Python notation. Next, let us define a symmetric distance matrix , with and the following auxiliary functions:

Note that and . Finally, we can use function and to define recurrence equations for . We start by rewriting the function as a sum of three functions:

Here, functions , , correspond to the average pairwise distances between and within the subsequences. See Original Work section for more details.

Note that , and , and because they correspond to empty subsequences. Moreover, and because each of them corresponds to an average pairwise distance within a subsequence consisting of a single point, i.e., each of them is the sum with zero terms. Using that, we can compute values for , , and iteratively. Note that functions and are computed as increases, and function is computed as decreases.

These formulas are effectively used in Apache Otava after some NumPy vectorizations. For details see change_point_divisive/calculator.py.